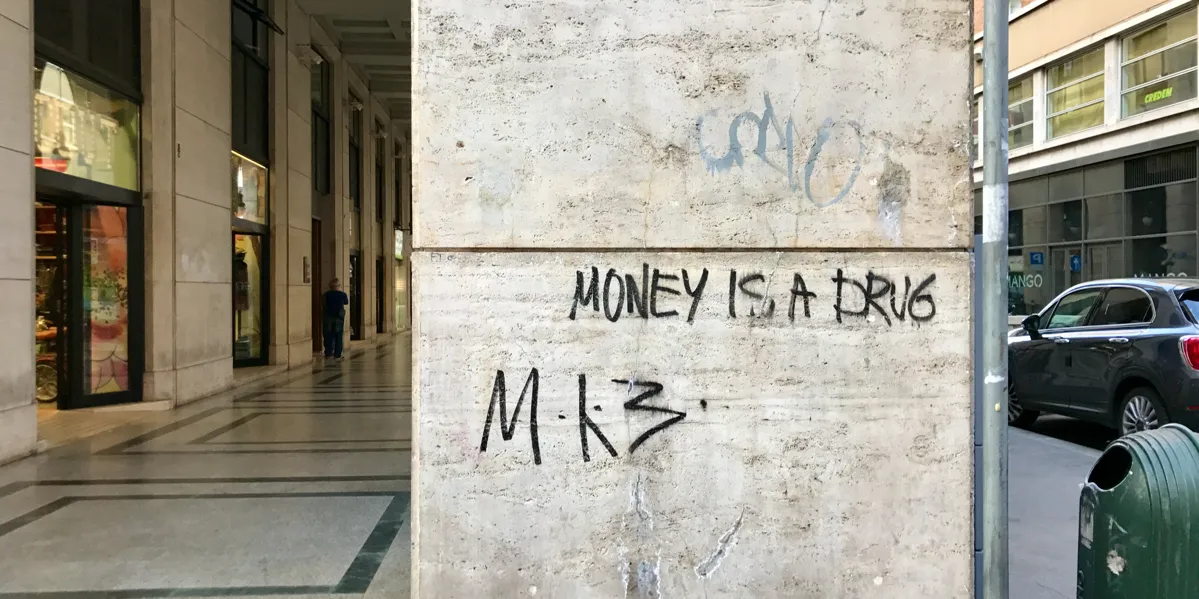

How a global professional services firm spent $50 million building an internal application on top of a platform that was supposed to save them money — and ended up held hostage by the thing they chose because it was "free."

What They Were Experiencing

A global professional services firm — one of the largest in the world — asked us to assess the DevOps practices on an internal compliance application. The app tracked tax obligations, managed documents, and handled workflow for thousands of clients across multiple countries. It was, in their words, a "table stakes" product — something regulatory requirements forced them to have. Not glamorous, but essential to winning and servicing client work.

The application had started life around 2010 as a modest $375,000 project. By the time we arrived in 2015, it had consumed approximately $50 million and at its peak had 70 to 80 people working on it. At the time of our assessment, the team was 40 to 50 people spread across the US, Spain, and India, organized into seven color-coded pods — each with a tech lead, developers, QA staff, and business analysts.

They had two major versions running simultaneously: version 4, which was in production serving around 12,000 clients, and version 5, which was supposed to replace it but was barely being used by a handful of internal users. Version 5 had been meant to go live months earlier. It hadn't.

Leadership was frustrated. Releases were painful. Deployments took entire weekends. The upgrade path from version 4 to version 5 was, as one stakeholder put it, "underpants gnomes": step one, have two versions; step two, unclear; step three, everyone's on the new version. A previous informal assessment had already flagged problems, and nothing had meaningfully changed.

They thought they needed help with their DevOps pipeline. What they actually needed was someone to tell them that their platform choice had become a prison.

What They Thought Was Wrong

Leadership believed the core problems were:

- Their build and deploy pipeline needed automation

- Their Agile practices needed maturing

- The teams needed to release more frequently with less pain

- Stakeholder communication needed improvement

All of these were true. But they were symptoms, not causes. The real problem was architectural, and it had been baked in from the very first decision.

What I Actually Found

My colleague and I spent multiple days on-site interviewing tech leads, developers, QA staff, business analysts, the engagement lead, the product stakeholder, and the director overseeing the effort. We talked to people in the US, got perspectives from the offshore teams, and traced the path of a code change from developer check-in to production deployment. Here's what emerged.

Finding #1: SharePoint Was King of the Hill — And That Was the Core Problem

The application had been built on top of Microsoft SharePoint. The original rationale was seductive: SharePoint gives you so much "for free." Navigation menus, document management, authentication, site structure — all out of the box. Why build what you can get for nothing?

The problem was that "free" had become the most expensive decision in the project's history.

When you build your application inside SharePoint, SharePoint becomes the king of your architecture. Every decision — how you deploy, how you test, how you handle security, how you structure your screens, how you manage your data — has to answer first to SharePoint's needs and eccentricities, and second to your actual business requirements. Your application doesn't use SharePoint as a service. Your application is an extension of SharePoint. And SharePoint's needs come first.

This meant the firm was essentially running a $50 million custom compliance application as a guest inside someone else's platform. Every deployment had to work around SharePoint's deployment model. Every cumulative update from Microsoft almost always broke something and triggered a full regression test that took four to five days. Every architectural discussion had to start with "how does SharePoint feel about this?" before getting to "what does the business need?"

One team member captured it perfectly in a meeting: "We're trying to put 70% of our logic into someplace it won't fit."

The navigation and menus that SharePoint provided "for free"? Those features would have taken a competent development team a few weeks to build from scratch. Instead, the firm had spent years and millions working around the constraints of getting those features for free.

Finding #2: The Security Model Was a Ticking Time Bomb

The application had complex authorization requirements driven by the nature of global tax compliance. Which users could see which clients, in which jurisdictions, with what level of access — these weren't simple role-based permissions. They were deeply contextual and specific to the firm's business.

The teams had bent over backward to implement this authorization model within SharePoint's security framework. SharePoint's security model is designed to be general-purpose — good enough for most customers in most situations. This application's security requirements were anything but general-purpose.

The current implementation meant that when (not if) the firm needed to change how authorization worked — say, adding a new jurisdictional rule or restructuring which partners could see which clients — the first question would be "can SharePoint do this?" rather than "what does the business need?" And there would almost certainly come a day when the answer to that first question was no.

Authorization should have been owned by the application and driven by business needs. Instead, it was owned by SharePoint and constrained by SharePoint's assumptions about how security should work. The application was dragging around SharePoint's security implementation like a ball and chain.
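To make "owned by the application" concrete, here is a minimal sketch of what an application-level authorization check could look like. It is an illustration only, not the firm's design: the `Grant`, `Access`, and `AuthorizationPolicy` names, and the sample users, clients, and jurisdictions, are all hypothetical.

```python
from dataclasses import dataclass
from enum import IntEnum


class Access(IntEnum):
    """Hypothetical access levels, ordered so a higher value implies more access."""
    NONE = 0
    READ = 1
    WRITE = 2


@dataclass(frozen=True)
class Grant:
    user: str
    client: str
    jurisdiction: str
    level: Access


class AuthorizationPolicy:
    """Application-owned rules: who may do what, for which client, in which jurisdiction."""

    def __init__(self, grants):
        # Index grants by (user, client, jurisdiction) for direct lookup.
        self._grants = {(g.user, g.client, g.jurisdiction): g.level for g in grants}

    def allowed(self, user, client, jurisdiction, needed):
        # Deny by default: a missing grant means no access.
        level = self._grants.get((user, client, jurisdiction), Access.NONE)
        return level >= needed


# Hypothetical sample data.
policy = AuthorizationPolicy([
    Grant("alice", "acme", "US", Access.WRITE),
    Grant("alice", "acme", "ES", Access.READ),
])
```

The point of the sketch is where the rules live. A new jurisdictional rule becomes a change to code and data the team controls, so the first question is about the business rule and not about what the platform's security model allows.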

Finding #3: Deploying Was an Ordeal That Punished Everyone

The deployment process was genuinely painful, and the pain radiated outward to touch every team.

For version 4, a production deployment took about a day and required taking the entire system offline — which meant it could only happen on weekends. A human had to manually edit a PowerShell startup script to specify which deployment steps should actually run. The version 4 codebase had accumulated over 1,000 SharePoint Solution files, each one a potential point of failure during deployment.

For version 5, compilation alone took 24 minutes across 200+ Visual Studio projects — a number that was only going to grow. The deployment scripts took approximately 40 minutes per environment and executed blindly without any verification that the operations actually succeeded. When we asked why the scripts didn't stop on failure, the answer was disarmingly honest: "Because then we'd have to write that code." They deployed infrequently enough that nobody had bothered to add error handling — and the lack of error handling was one of the reasons they deployed infrequently. A perfect anti-DevOps feedback loop.
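"That code" does not have to be large. The sketch below shows one way a deployment runner could stop at the first failed step and let the caller choose steps by name instead of hand-editing the script. It is written in Python for illustration; the team's real scripts were PowerShell, and the step names and stand-in commands here are hypothetical.

```python
import subprocess
import sys


def run_steps(steps, selected=None):
    """Run each (name, argv) step in order and stop at the first failure.

    Returns the names of the steps that completed successfully.
    Raises RuntimeError naming the step that failed.
    """
    completed = []
    for name, argv in steps:
        if selected is not None and name not in selected:
            continue  # skipped by the caller's choice, not by editing the script
        result = subprocess.run(argv, capture_output=True, text=True)
        if result.returncode != 0:
            raise RuntimeError(
                f"step {name!r} failed with exit code {result.returncode}: "
                f"{result.stderr.strip()}"
            )
        completed.append(name)
    return completed


# Stand-in commands so the sketch runs anywhere; real steps would invoke
# the actual deployment tooling.
STEPS = [
    ("backup", [sys.executable, "-c", "print('backup ok')"]),
    ("install", [sys.executable, "-c", "import sys; sys.exit(3)"]),
    ("smoke-test", [sys.executable, "-c", "print('never reached')"]),
]
```

Calling `run_steps(STEPS)` raises as soon as the `install` step exits non-zero, so `smoke-test` never runs against a half-deployed environment. Calling `run_steps(STEPS, selected={"backup"})` runs only the named step.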

But the real deployment nightmare was what happened to the QA team. QA didn't have permission to deploy to their own test environment. To get a new build into QA, someone had to spend 45 to 60 minutes filling out a deployment document — a template specifying what changed, what was fixed, and which components needed updating. That document was sent to a separate operations team, who sometimes rejected it and sent it back for rework. Even when accepted, the operations team might not prioritize it immediately because they were supporting other applications too. The actual deployment then took anywhere from 10 minutes to 4 hours depending on complexity.

The QA team told us flatly that these deployment activities were a distraction from their actual testing work. The friction was so high that it incentivized everyone to deploy less often and test less often — the exact opposite of what a healthy delivery pipeline should do.

Finding #4: Seven Teams, Three Countries, No Real Product Ownership

The application had seven development pods, a QA organization, business analysts, an engagement lead, and a product stakeholder. It had an architecture review council that required teams to produce Candidate Architecture Documents and Decision Architecture Documents before implementing significant changes. It had multiple meeting cadences — twice-weekly issue meetings, twice-weekly BA meetings, separate dev lead meetings.

What it didn't have was a genuine product owner with real authority.

The person closest to the product owner role functioned more as a liaison between the business and the development teams — a go-between rather than a decision-maker. He'd lost faith in the team's estimates, but the teams kept estimating anyway. Prioritization, as far as we could tell, was driven by whoever lobbied hardest or shouted loudest. One interviewee confirmed this more diplomatically than I'm stating it, but the pattern was unmistakable.

The economics of the project weren't well understood by the team. Millions of dollars had gone into a product described as low-revenue "table stakes." The fundamental product ownership question — "how do we create this necessary product with the lowest total cost of ownership?" — had been replaced by traditional project management thinking: "how do we get these features done by this date?" Those are very different questions, and they lead to very different decisions.

Finding #5: Architecture Documents Nobody Verified

The architecture review process was elaborate. Teams wrote CADs (Candidate Architecture Documents) describing the problem and proposed solution. They presented SWOT analyses to an architecture review council — one representative from architecture, one from security, one from infrastructure. Approved designs were recorded in DADs (Decision Architecture Documents).

When we asked "how confident are you that what gets implemented actually matches the DADs?", the answer was essentially: not very confident. Code reviews happened sometimes but not consistently, and they weren't tied to the deployment process. The teams were spending significant effort documenting their architectural intentions and lobbying for approval, but nobody was checking whether reality matched the blueprints.

This was an enormous waste of time and effort — governance theater that created the appearance of architectural discipline without the substance. Worse, it probably stifled innovation, because teams had to navigate a bureaucratic approval process for changes that nobody would verify anyway.

Finding #6: A Tangled Web of Legacy Systems

The compliance application wasn't actually one application. It was a constellation of interconnected systems, each with its own history, technology stack, and hosting location.

The core business logic — obligation management, rules engines, due date tracking — lived in a separate system hosted in the UK, built in classic ASP with a rules engine written in VB6. The compliance application integrated with this system through a complex web of synchronization processes and iFrame embedding. A third system provided reporting dashboards with a Windows 8 Metro-style interface. And underneath all of it, SharePoint tried to be the glue holding everything together.

Data was spread across jurisdictions with genuine legal implications. The UK system held certain data. The US SharePoint servers held other data. An index server in the US was used to locate data across the distributed system, which raised questions about whether it complied with international data regulations. Security tokens could get out of sync between systems. Entity ID mappings between systems were managed in yet another layer.

When I asked whether this was a multi-tenant system, the team had trouble answering, which told me more than any definitive answer could have. If the people building and maintaining the system can't clearly articulate whether customer data is shared or isolated, that ambiguity is very likely feeding deployment problems, security confusion, and architectural drift.

Finding #7: The DevOps Team Was an Anti-Pattern

The project had a dedicated DevOps team. That sounds like it should be a good thing. It wasn't.

The DevOps team owned the deployment scripts and the deployment process. The development teams had almost no knowledge of how their code actually got deployed to production. When a team needed to deploy, they contacted the DevOps team, who handled it. The developers were producing code. A separate group was responsible for getting that code to customers. The gap between those two activities was enormous.

This is the exact anti-pattern that the DevOps movement was created to fix. The entire philosophy of DevOps is to bring development and operations as close together as possible. Having a separate team that owns all the deployment knowledge and all the deployment permissions creates a bottleneck, removes accountability from the development teams, and ensures that deployment is always someone else's problem.

Meanwhile, the developers couldn't even create their own source control branches. When we asked a developer "can you create a branch?", the answer was simply "no." An offshore team in India handled all branching and merging. The developers were writing code they couldn't branch, building features they couldn't deploy, and testing against environments they couldn't provision.

Finding #8: Unplanned Work Was Invisible

The teams didn't track fire drills or unplanned work. When emergencies came up — and they came up regularly, driven by production support issues — nobody logged them. Sprint retrospectives relied on memory, which meant the team was systematically forgetting the interruptions that were fragmenting their capacity.

Without visibility into unplanned work, you can't find the patterns. You can't ask "why do we keep getting emergency requests about the same subsystem?" or "how much of our sprint capacity is actually going to fires versus planned features?" You can't make a rational argument to leadership about the cost of interruptions because you have no data. The teams were experiencing the pain of constant context-switching but had no evidence to point to when explaining why everything took longer than estimated.
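The data needed to answer those questions is small. As a rough sketch, assuming each interruption is logged with a sprint number, a subsystem, and the hours it cost (the `Interruption` record, the subsystem names, and the 80-hour capacity figure are all made up for illustration):

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Interruption:
    sprint: int
    subsystem: str
    hours: float


def hours_by_subsystem(log):
    """Total unplanned hours per subsystem, to show where fires cluster."""
    totals = Counter()
    for item in log:
        totals[item.subsystem] += item.hours
    return totals


def unplanned_share(log, sprint, capacity_hours):
    """Fraction of a sprint's capacity that went to unplanned work."""
    spent = sum(item.hours for item in log if item.sprint == sprint)
    return spent / capacity_hours


# Hypothetical log entries.
LOG = [
    Interruption(1, "sync", 6.0),
    Interruption(1, "reporting", 2.0),
    Interruption(2, "sync", 8.0),
]
```

With a log like this, `hours_by_subsystem(LOG).most_common(1)` points at the subsystem generating the most fires, and `unplanned_share(LOG, 1, 80.0)` gives leadership a number to react to instead of a recollection.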

The Diagnosis

I told them that this project had a software architecture problem, not a DevOps problem. While there was room for improvement in their DevOps practices, the amount of improvement would be severely limited by the architecture. In short: the problem was SharePoint.

Until the application was rescued from the tyranny of the platform it was built inside, there would be no significant opportunities to improve the delivery pipeline. You can optimize the deployment scripts all you want, but if every deployment has to genuflect to SharePoint's requirements before addressing the business's requirements, you're optimizing inside a cage.

The features they'd gotten "for free" — menus, navigation, document management, authentication — were costing them millions in deployment complexity, testing overhead, architectural constraints, and lost agility. Every Microsoft patch triggered days of regression testing. Every new business requirement had to be filtered through "can SharePoint do this?" Security was implemented to serve SharePoint's model rather than the business's model. The platform that was supposed to accelerate them had become their heaviest anchor.

Beyond the architecture, the project was suffering from a more fundamental absence: real product ownership with real economic thinking. Nobody was asking "what is the lowest total cost of ownership for this capability?" They were asking "how do we hit the next release date?" — and answering that question by throwing more people at a platform that fought them every step of the way.

What We Recommended

We deliberately sequenced the recommendations to build on each other, starting with the foundations.

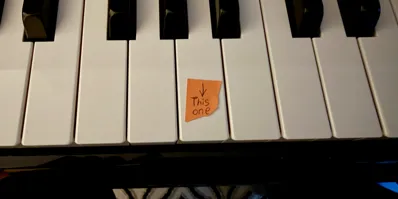

Acknowledge that this is an architecture problem first. Stop treating symptoms. The platform choice was the constraint. Every other improvement would be limited until the application was extricated from inside SharePoint and repositioned as the "king of the hill" — consuming SharePoint as a service where genuinely needed, rather than living inside it.

Identify which SharePoint features are truly needed — and start planning to own everything else. Menus and navigation? Build them. Security? Own it with a purpose-built authorization framework. Document management? Maybe keep SharePoint for that, but as a service the application calls, not a platform the application lives inside.
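One common way to express "a service the application calls" is to have the application depend on a small interface it owns, with the platform hidden behind an adapter. The sketch below assumes that approach. `DocumentStore`, `InMemoryDocumentStore`, and `FilingService` are hypothetical names, and no real SharePoint API is shown.

```python
from typing import Protocol


class DocumentStore(Protocol):
    """The only document operations the application is allowed to rely on."""

    def save(self, client_id: str, name: str, content: bytes) -> str: ...

    def load(self, document_id: str) -> bytes: ...


class InMemoryDocumentStore:
    """Stand-in used for tests; a SharePoint-backed adapter would offer the same methods."""

    def __init__(self):
        self._docs = {}

    def save(self, client_id, name, content):
        document_id = f"{client_id}/{name}"
        self._docs[document_id] = content
        return document_id

    def load(self, document_id):
        return self._docs[document_id]


class FilingService:
    """Application logic that knows about DocumentStore and nothing about the platform."""

    def __init__(self, store: DocumentStore):
        self._store = store

    def attach_return(self, client_id, content):
        return self._store.save(client_id, "return.pdf", content)
```

Under this arrangement the application can be built, tested, and deployed against the in-memory stand-in, and replacing the document backend means writing one new adapter.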

Focus on Scrum before DevOps. Teams with good DevOps practices are almost always teams with mature Agile practices; you rarely see good DevOps without them. Proper Scrum adoption would force the issue of DevOps because teams would want and need automated build/test/deploy in order to actually finish work every sprint. It would also eliminate the pattern of treating sprints as mini-waterfalls.

Dissolve the separate DevOps team. Distribute deployment knowledge and permissions to every development pod. The current DevOps team should become mentors, not gatekeepers. Every developer should understand how the application is deployed and have the skills to write and maintain deployment scripts. Make deployment scripts part of the Definition of Done.

Give QA teams the power to deploy to their own environments — immediately, without forms, without waiting for a separate team. The current process was a 45-to-60-minute tax on every test cycle that actively discouraged testing.

Establish genuine product ownership. Someone needed the authority and the mindset to make economic decisions about the product — not just feature prioritization by volume, but actual cost-of-ownership thinking that would question platform choices, team size, and architectural direction.

Start tracking unplanned work. Every fire drill, every emergency, every context switch — log it. Without data, the teams couldn't learn from their retrospectives and leadership couldn't see the true cost of the interruptions they were generating.

Stop doing architecture governance theater. Either verify that implementations match the architectural documents — through systematic code reviews tied to the deployment process — or stop requiring the documents. The current approach was the worst of both worlds: all the bureaucratic overhead with none of the quality assurance.

The Lesson

This was a $50 million lesson in what "free" actually costs.

Every platform decision involves a trade-off between speed-to-start and long-term maintainability. Platforms that help you "go fast" and deliver a lot of functionality quickly carry the risk of robbing you of flexibility in the long run. SharePoint, content management systems, low-code platforms, all-in-one frameworks — they can be the right choice when the application is small, simple, and unlikely to evolve in complex ways. But the moment your application's needs diverge from the platform's assumptions — and for any enterprise application, that moment will come — you start paying compound interest on the initial convenience.

This firm didn't make one catastrophic decision. They made a reasonable decision in 2010, and then spent five years accommodating the consequences of that decision rather than confronting it. Each accommodation was individually rational: work around the deployment constraints, bend the security model, add more people, create more process. The accumulation of those accommodations created a system where 40 to 50 smart, capable people were working incredibly hard and delivering far less than they should have been — not because they weren't good at their jobs, but because the architecture was working against them at every turn.

The hardest recommendation wasn't technical. It was asking leadership to admit that the foundational technology choice — the one that had seemed so smart because it was "free" — was the thing that needed to change. Nobody likes hearing that the road they've been paving for five years goes to the wrong destination. But sometimes the most expensive thing you can do is keep building on a foundation that's fighting you.

If your team spends more time working around the platform than working on the product — if every deployment feels like surgery — if your architecture forces you to ask "can the tool do this?" before asking "what does the business need?" — the problem might not be your process. It might be the foundation you built on.

That's the kind of problem I help companies see clearly.